ETL Pipeline

In this project, we create an ETL pipeline.

The system will use Kafka to stream data from a variety of sources, such as credit card transactions and social media posts. Spark will then be used to process the data and identify any fraudulent activity.

This project builds a robust ETL (Extract, Transform, Load) process using SQL. Let’s look behind the scenes as we engineer a data pipeline to handle this task.

This Spark data engineering project analyzes large volumes of customer data, using Spark to surface patterns in customer behavior.

This project walks through a real-world Spark data engineering project. It uses Spark to extract data from a CSV file, clean and transform the data, and load it into a MySQL database.
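The extract, clean, and load steps described above can be sketched in a few lines. This is only an illustration of the ETL shape, not the project's code: it assumes Python's standard `csv` and `sqlite3` modules as stand-ins for Spark and MySQL, and the sample rows, the `customers` table, and the function names are all hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical sample input standing in for the project's CSV file.
RAW_CSV = """id,name,amount
1, Alice ,10.50
2,Bob,
3,Carol,7.25
"""


def extract(text):
    """Extract: parse CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim whitespace, drop rows with no amount, cast types."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append((int(row["id"]), row["name"].strip(), float(amount)))
    return cleaned


def load(rows, conn):
    """Load: write the cleaned rows into a database table (SQLite here, not MySQL)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
result = conn.execute(
    "SELECT id, name, amount FROM customers ORDER BY id"
).fetchall()
print(result)
```

Keeping the three stages as separate functions mirrors how the same steps would likely be split in a Spark job, where the read, the DataFrame transformations, and the database write are distinct calls.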

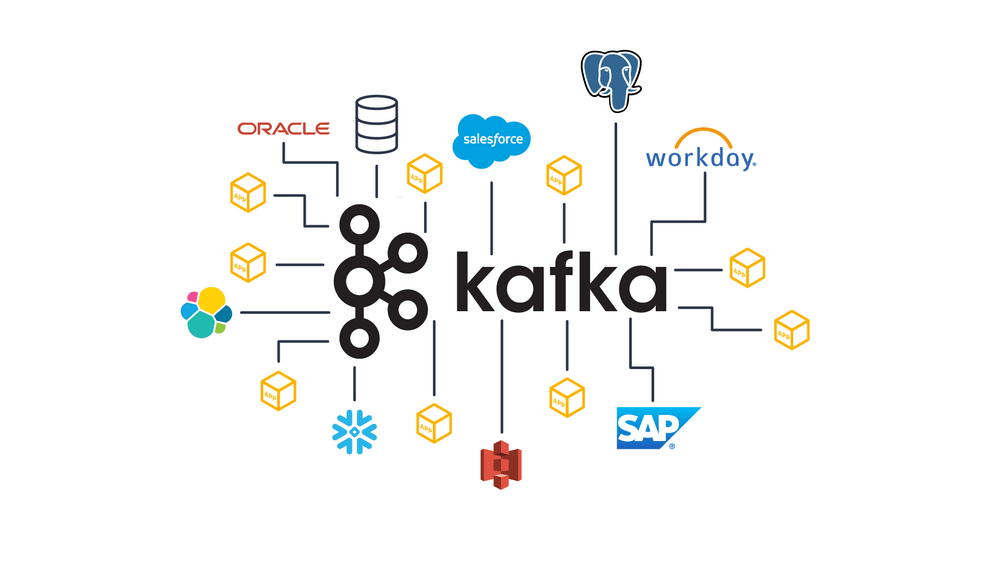

In this article, we will dive deep into the concept of a Kafka pipeline, exploring its significance, architecture, and implementation.

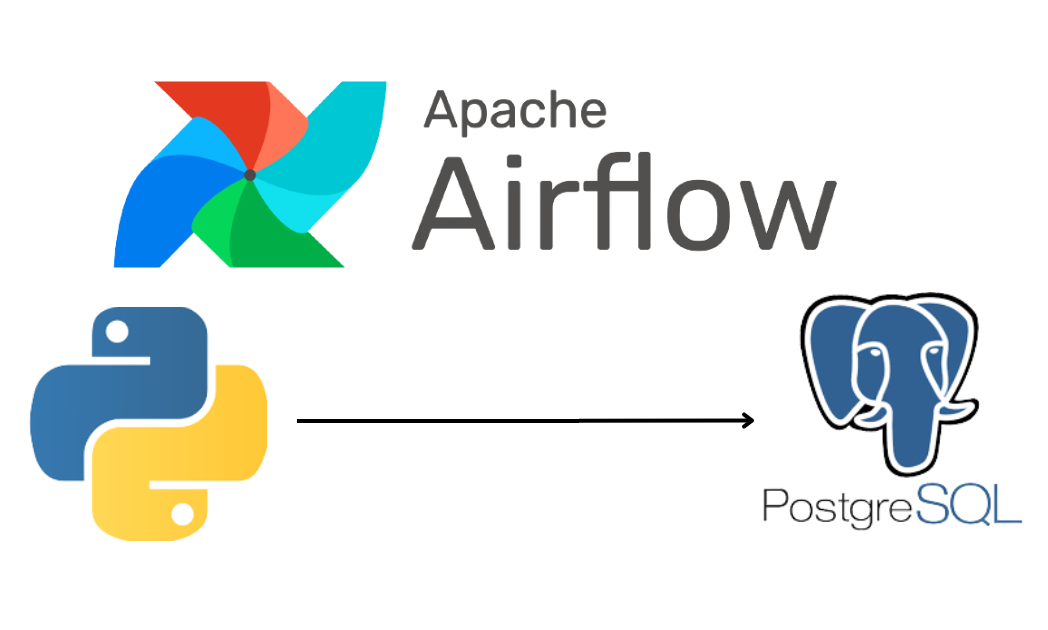

This project shows how to create an ETL pipeline using Airflow to extract weather data from a CSV file, transform it, and load it into a PostgreSQL database.

This project applies regression techniques to predict the presence of diabetes in a person and compares the classification accuracy of each model.

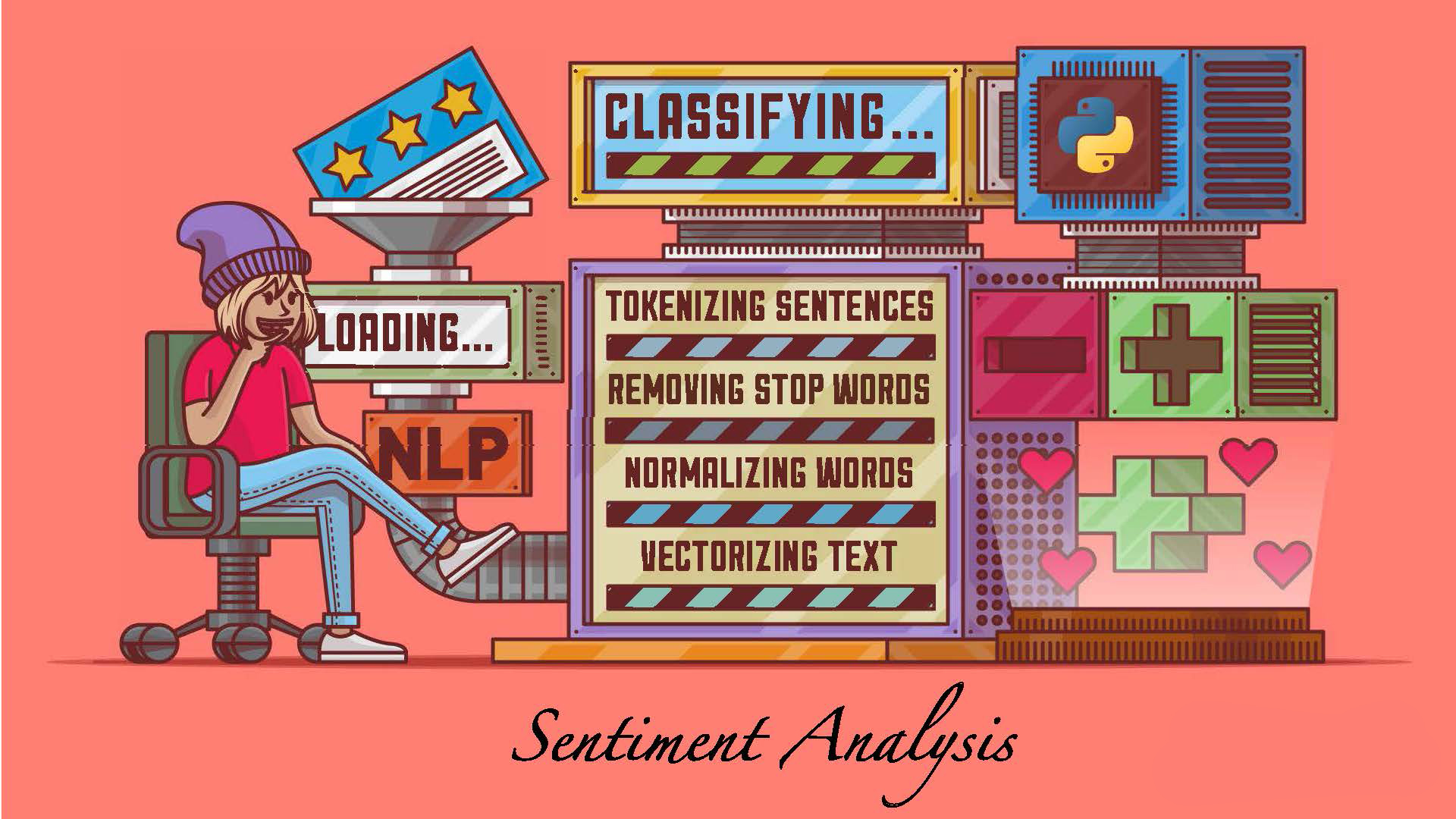

This project utilizes sentiment analysis techniques to detect the genre of a book, enabling efficient classification based on the analysis of textual reviews and feedback.

A comparative analysis of Keras on TensorFlow and PyTorch.

In this project, we implement and compare various machine learning models for the detection of online payment fraud, aiming to identify the most effective solution through comparative analysis.

This project demonstrates the development of a Flask CRUD application utilizing the Psycopg2 library, enabling efficient management of data through a user-friendly interface.

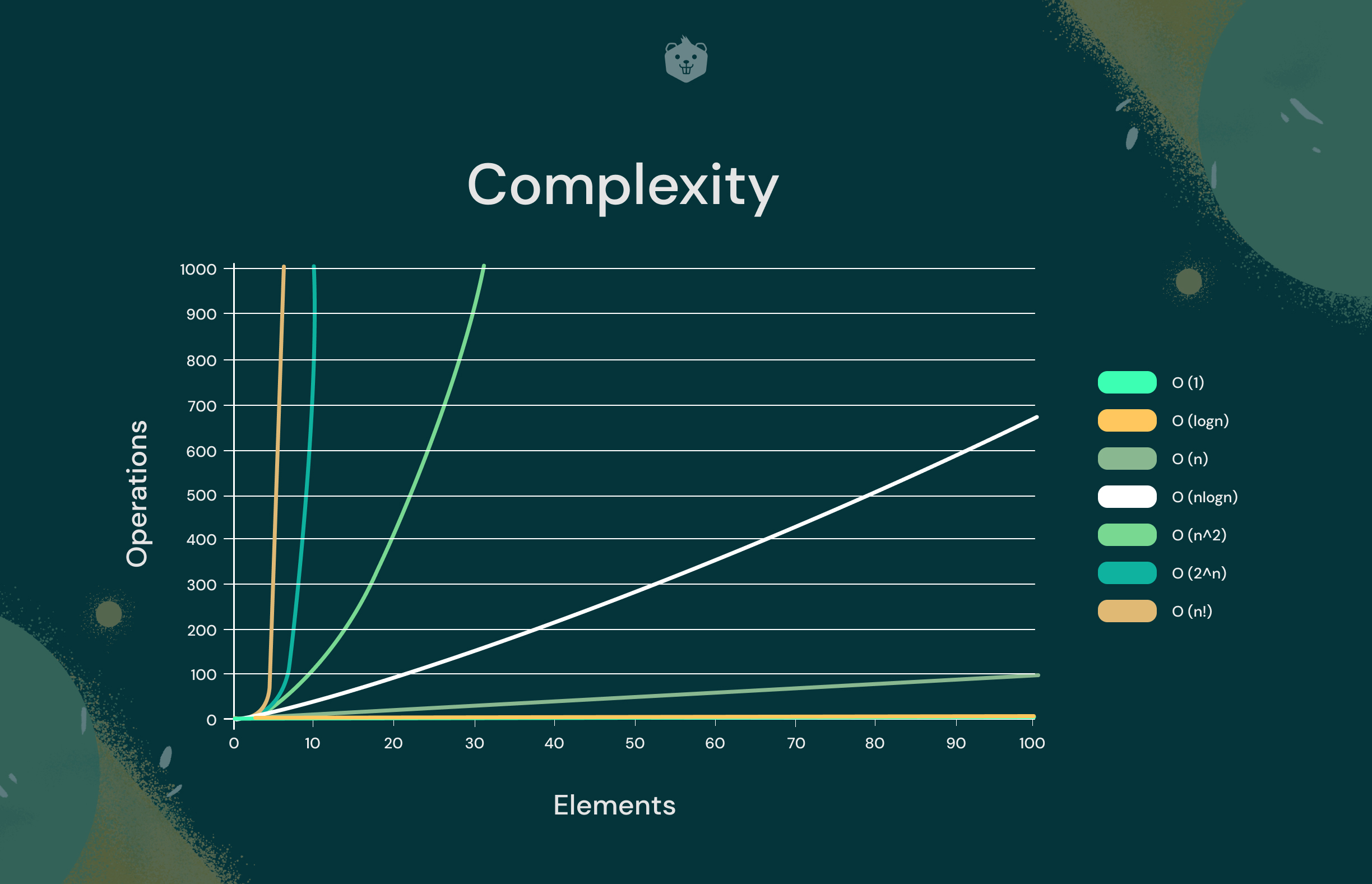

This project implements several data search algorithms and compares their running-time complexity, providing insights into their efficiency and performance in different scenarios.
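As a rough illustration of this kind of comparison, the sketch below counts how many elements linear search and binary search inspect on the same sorted list. The choice of these two algorithms, the function names, and the test data are assumptions made for the example; the project itself may cover different algorithms or measure wall-clock time instead.

```python
def linear_search(items, target):
    """Scan left to right; return (index, number of elements inspected)."""
    probes = 0
    for i, value in enumerate(items):
        probes += 1
        if value == target:
            return i, probes
    return -1, probes


def binary_search(items, target):
    """Halve a sorted range each step; return (index, number of elements inspected)."""
    lo, hi = 0, len(items) - 1
    probes = 0
    while lo <= hi:
        mid = (lo + hi) // 2
        probes += 1
        if items[mid] == target:
            return mid, probes
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return -1, probes


# Worst case for linear search: the target is the last element.
data = list(range(1024))
lin_idx, lin_steps = linear_search(data, 1023)
bin_idx, bin_steps = binary_search(data, 1023)
print(f"linear: {lin_steps} probes, binary: {bin_steps} probes")
```

Counting probes rather than timing the calls keeps the comparison deterministic: linear search grows with the list length, while binary search grows with its logarithm, provided the input is sorted.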